People call everything they dislike generic output, but the label gets more useful when you look at what causes it. Most of the time the problem is not the model itself. The problem is a workflow that rewards speed over taste, specificity, and continuity.

If you want better output, you need stronger reference logic, tighter approvals, and a clearer definition of what the work is supposed to feel like before the model starts improvising.

Slop starts when the brief is doing no real work

A vague prompt produces vaguely competent output. It often looks finished at a glance and disappoints on a second look.

The brief needs to communicate visual intent, narrative role, texture, restraint, and what absolutely should not appear. Without that, the model defaults to the most average interpretation of the ask.

Taste has to be externalized into constraints

Creative teams usually know when something feels cheap, oversharpened, or generic, but they often do not convert those instincts into reusable constraints.

Once taste is written down as style guidance and negative rules, the system gets noticeably more stable, because the model no longer has to guess what you mean by premium, cinematic, literary, restrained, or atmospheric.

- Write down what the work should feel like.

- Write down what cheapens the look.

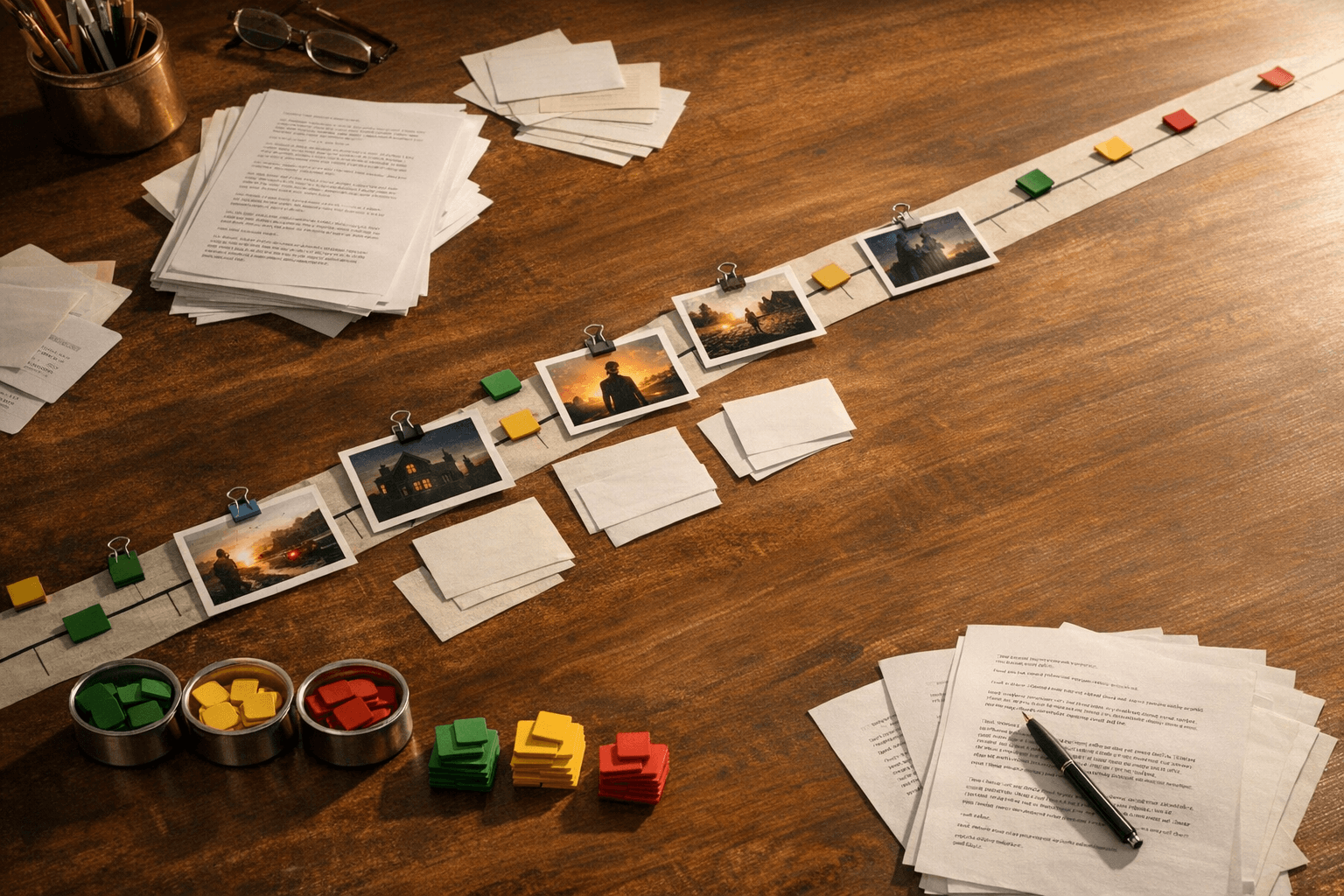

- Save the prompts and references that consistently produce the right register.
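
One way to make those three notes reusable is to store them as data rather than as tribal knowledge. The sketch below is only an illustration: `StyleGuide`, its field names, and the example values are hypothetical, and it assumes a human reviewer tags each output with the traits they see in it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StyleGuide:
    """Hypothetical container for taste that has been written down."""

    feel: tuple[str, ...]                    # what the work should feel like
    banned: tuple[str, ...]                  # what cheapens the look
    reference_prompts: tuple[str, ...] = ()  # prompts that hit the right register

    def violations(self, tags: set[str]) -> list[str]:
        """Return the banned traits found in a reviewer's tags, sorted."""
        return sorted(t for t in tags if t in self.banned)

    def prompt_suffix(self) -> str:
        """Render the guide as plain text that can be appended to a prompt."""
        return f"Style: {', '.join(self.feel)}. Avoid: {', '.join(self.banned)}."


# Example values are illustrative, not a recommended house style.
guide = StyleGuide(
    feel=("restrained", "atmospheric"),
    banned=("oversharpened", "lens flare"),
)
```

With this in place, `guide.violations({"oversharpened", "moody"})` flags only the trait the team has explicitly ruled out, and `guide.prompt_suffix()` gives every prompt the same written definition of the register.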

Canon beats novelty when the work has to compound

A one-off image can survive on novelty. A production system cannot. The more assets you generate, the more important it becomes that approved work compounds instead of scattering.

Canon makes quality repeatable. It gives the team a standard for what a character, set, or prop should look like when the model is asked to expand on it later.

Approval should be editorial, not impulsive

Teams often approve what feels most immediately dramatic, then regret it when it fails to match adjacent scenes. Editorial approval is slower in the moment and faster in the long run because it favors coherence over instant spectacle.

That means judging output against the story system, not just against the dopamine hit of a single frame.

The fix is a better loop, not a longer prompt

Long prompts can still produce slop if the references are weak and the team has no memory. The real fix is a loop: define canon, generate, review against constraints, save provenance, and feed approved work back into the system.

Once that loop exists, quality rises because the team is making cumulative decisions instead of starting from zero every time.
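
The loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production pipeline: `Asset`, `Canon`, and `run_loop` are hypothetical names, and `generate` and `review` are stand-ins for whatever model call and editorial check a team actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Asset:
    prompt: str
    output: str
    references: list[str]  # provenance: the canon entries used as references


@dataclass
class Canon:
    approved: list[Asset] = field(default_factory=list)

    def references(self) -> list[str]:
        """Outputs of all approved assets, used as references for new work."""
        return [a.output for a in self.approved]


def run_loop(
    canon: Canon,
    prompt: str,
    generate: Callable[[str, list[str]], str],
    review: Callable[[str], list[str]],
) -> Optional[Asset]:
    """Generate against canon, review, and feed approved work back in."""
    refs = canon.references()
    output = generate(prompt, refs)
    if review(output):  # any listed problem means the output is rejected
        return None
    asset = Asset(prompt=prompt, output=output, references=refs)
    canon.approved.append(asset)  # approved work becomes canon
    return asset


# Stand-ins so the sketch runs without a model (both are assumptions).
def fake_generate(prompt: str, refs: list[str]) -> str:
    return f"{prompt} [refs={len(refs)}]"


def fake_review(output: str) -> list[str]:
    return ["oversharpened"] if "oversharpened" in output else []


canon = Canon()
first = run_loop(canon, "harbor at dawn", fake_generate, fake_review)
second = run_loop(canon, "harbor at dusk", fake_generate, fake_review)
rejected = run_loop(canon, "oversharpened harbor", fake_generate, fake_review)
```

The second call sees the first approved asset as a reference, and the rejected output never enters canon. That is the cumulative behavior the loop is meant to produce.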