Most teams try to get consistency from prompt wording alone. That works for one or two generations, then breaks the moment the scene, camera angle, or lighting changes.

Consistent image generation comes from treating the subject like canon. The model needs stable references, tracked state, and a memory of which prompts created the approved look in the first place.

Canonize the subject before you chase variants

If you want a character, object, or environment to stay recognizable, lock down a canonical reference set before you explore dramatic variations. That reference set should capture identity, silhouette, palette, and surface details.

Without a canonical anchor, every new prompt asks the model to reinvent the subject from a partial description. The result is drift disguised as creativity.

Separate identity from scene direction

The most stable workflows keep two layers of information: what the subject always is, and what changes for this specific image. Identity should not have to be restated from scratch every time you change the lens, weather, or action.

Once those layers are separated, you can vary the staging without losing the core visual truth.

- Identity layer: face, costume logic, body shape, key props, material language

- Scene layer: location, camera, action, lighting, emotional tone

- Output layer: aspect ratio, crop, detail priority, final use in product or marketing
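
One way to make the three layers concrete is to keep each one as its own record and join them only when a prompt is built. The sketch below is a minimal illustration, not a prescribed schema: the class names, field names, and `compose_prompt` are all hypothetical, and the comma-joined prompt format is an assumption that a real image model may or may not prefer.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class IdentityLayer:
    # What the subject always is; reused unchanged across images.
    face: str
    costume: str
    body_shape: str
    key_props: str
    materials: str


@dataclass(frozen=True)
class SceneLayer:
    # What changes for this specific image.
    location: str
    camera: str
    action: str
    lighting: str
    tone: str


@dataclass(frozen=True)
class OutputLayer:
    # Delivery constraints for the final asset.
    aspect_ratio: str
    crop: str
    detail_priority: str
    final_use: str


def compose_prompt(identity: IdentityLayer, scene: SceneLayer, output: OutputLayer) -> str:
    """Join the layers into one prompt string, identity first."""
    parts = [", ".join(astuple(layer)) for layer in (identity, scene, output)]
    return ". ".join(parts) + "."
```

Because the identity record is frozen and passed in whole, changing the lens or the weather means building a new `SceneLayer` while the identity text stays byte-for-byte the same.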

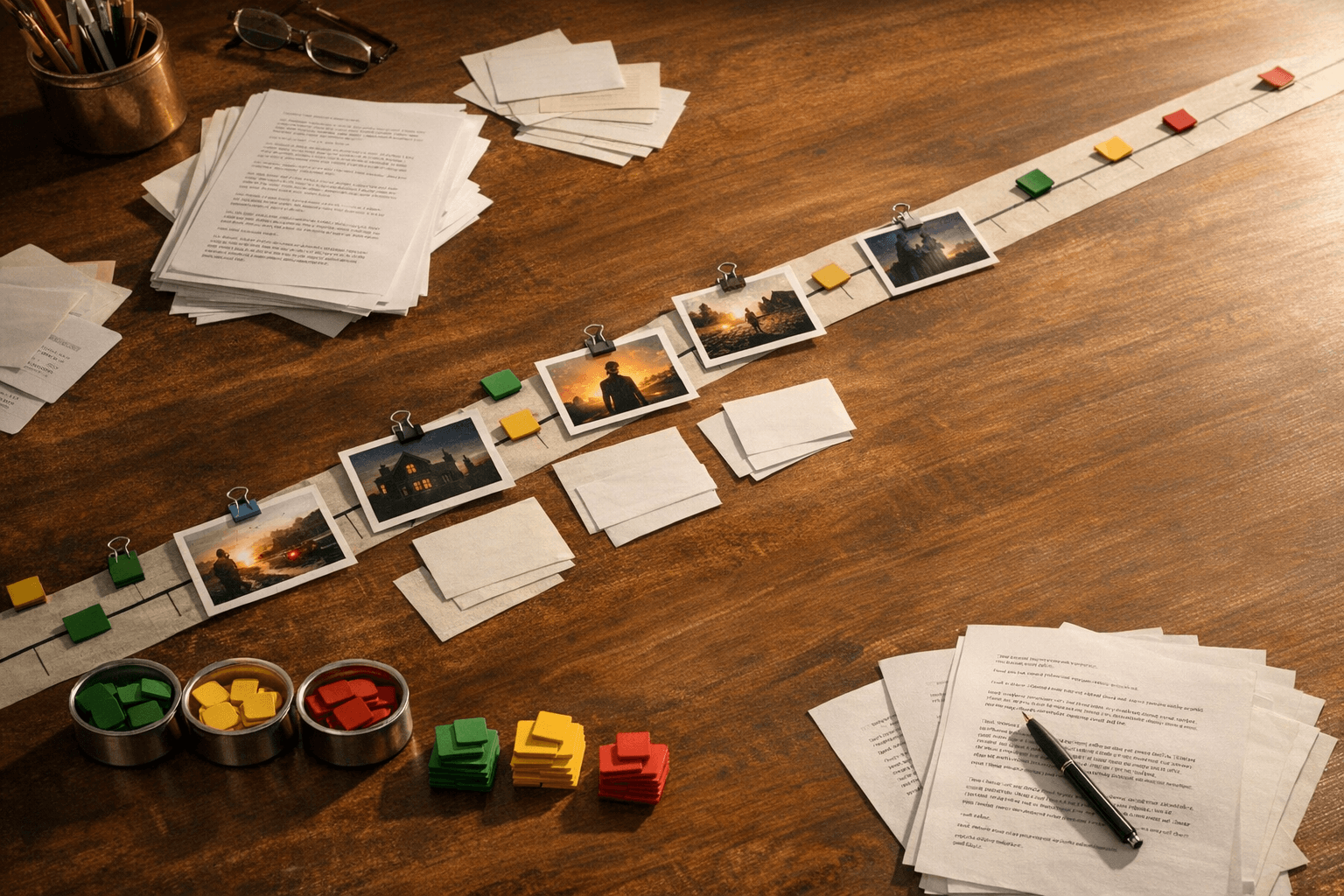

Track prompt provenance for every approved image

Teams often save the image and lose the reasoning that produced it. That means the next person trying to make a companion shot has to reverse-engineer a look from pixels alone.

Prompt provenance turns a good image into a reusable production asset. It tells you which instructions mattered, which constraints were stable, and what should carry forward into the next generation.
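
As a rough sketch of what a provenance record could hold, the example below stores the prompt, the constraints that stayed stable, and what should carry forward, then serializes it as JSON so it can live next to the image file. The `ProvenanceRecord` fields and the helper names are assumptions made for illustration; adapt them to whatever your pipeline already tracks.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ProvenanceRecord:
    image_path: str                     # the approved image this record describes
    prompt: str                         # the full prompt that produced it
    stable_constraints: List[str] = field(default_factory=list)  # instructions that held across attempts
    carry_forward: List[str] = field(default_factory=list)       # what the next generation should inherit
    rationale: str = ""                 # why the look works, in plain words


def to_json(record: ProvenanceRecord) -> str:
    """Serialize a record so it can be saved alongside the image."""
    return json.dumps(asdict(record), indent=2, sort_keys=True)


def from_json(text: str) -> ProvenanceRecord:
    """Rebuild a record from its saved JSON form."""
    return ProvenanceRecord(**json.loads(text))
```

With a record like this on disk, the person making a companion shot starts from the stated constraints instead of guessing them from pixels.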

Use state, not just mood boards

Mood boards are useful, but they do not capture what has changed in the story. If a coat is torn, a crown is missing, or a location has burned, the approved image set has to reflect that state change clearly.

This is where visual continuity starts to overlap with narrative continuity. The image system should know not just who the subject is, but what condition they are in right now.
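
Tracked state can be as simple as a baseline description plus an ordered list of changes. The following sketch assumes a hypothetical `StateChange` record and two helper functions; it folds the changes over the baseline so the prompt always reflects the subject's condition right now.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class StateChange:
    story_beat: str  # where in the story the change happened
    attribute: str   # what changed, e.g. "coat"
    condition: str   # its new condition, e.g. "torn at the left sleeve"


def current_state(baseline: Dict[str, str], changes: Iterable[StateChange]) -> Dict[str, str]:
    """Apply changes in story order over the baseline; later changes win."""
    state = dict(baseline)  # copy so the baseline itself is never mutated
    for change in changes:
        state[change.attribute] = change.condition
    return state


def state_clause(state: Dict[str, str]) -> str:
    """Render the state as a prompt fragment with a stable attribute order."""
    return "; ".join(f"{attr}: {cond}" for attr, cond in sorted(state.items()))
```

For example, a baseline of an intact coat and a worn crown, followed by a "coat torn" change and a "crown missing" change, yields a clause that describes the torn coat and the missing crown rather than the original look.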

Treat approvals as canon, not favorites

A beautiful image is not always the right image. The approved frame should be the one that best represents the canon you want the rest of the system to inherit.

When teams choose on taste alone without recording why, consistency breaks the moment they need a second angle, a revised scene, or a video continuation.
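
A lightweight way to enforce this is to make the reason a required part of the approval itself. The registry below is a hypothetical sketch, with names invented for illustration: it refuses an approval that arrives without a recorded reason and returns the approved frames for a subject on request.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Approval:
    image_id: str
    subject: str
    reason: str  # why this frame represents the canon


class CanonRegistry:
    def __init__(self) -> None:
        self._by_subject: Dict[str, List[Approval]] = {}

    def approve(self, image_id: str, subject: str, reason: str) -> Approval:
        """Record an approval; an empty reason is rejected."""
        if not reason.strip():
            raise ValueError("an approval needs a recorded reason")
        approval = Approval(image_id, subject, reason.strip())
        self._by_subject.setdefault(subject, []).append(approval)
        return approval

    def canon_for(self, subject: str) -> List[Approval]:
        """Return a copy of the approved frames for a subject, oldest first."""
        return list(self._by_subject.get(subject, []))
```

When someone later needs a second angle or a video continuation, `canon_for` returns both the frames and the reasoning behind them.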